I Kissed a Boy and I Liked It

Why synthetic boyfriends create real harms — and why that must concern everyone

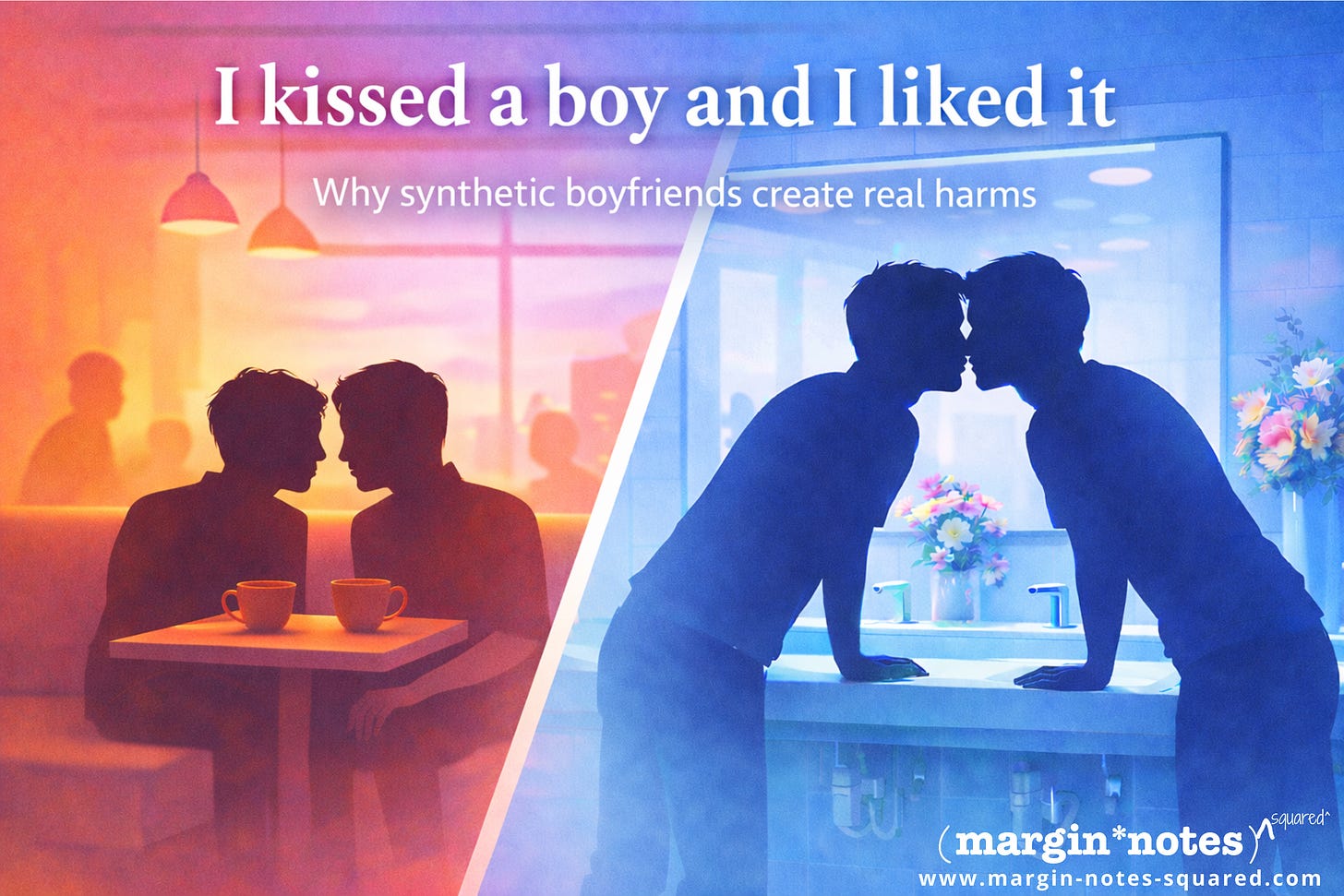

A first kiss is both exciting and tentative. It can feel perfect. Or it can feel awkward. Uncertain. Risky, even, because we don’t know the outcome. We don’t know if the other person feels what we feel, whether they will lean in too or pull away, whether what feels electric to us will also feel electric to them. That uncertainty is not incidental to intimacy, affection and connection. It’s what makes them so central to our human experience.

Years ago I was on a work trip to Singapore and arranged to catch up with someone I’d met many years before, when I was a very young new arrival in Jakarta. The now-defunct Nearby Friends feature on Facebook indicated he was in Singapore too, and after years of distance I thought it might be nice to reconnect.

We agreed to meet for dinner at Dempsey Hill, but I nearly cancelled, tired from a long day of shopping and walking around the city. We hadn’t seen each other in years; what if we had nothing in common and the evening turned out to be a waste of my time? But I kept the appointment. When I showed up he was already there, seated in a still mostly empty restaurant. I immediately felt butterflies in my stomach, which I had not expected at all. More than five hours later, we were the last ones in the restaurant. We were so absorbed in conversation that we lost track of time. I finally had to excuse myself to use the washroom. But as I was washing my hands, he suddenly appeared next to me. I looked into his eyes. We both leaned in.

It was that moment so familiar from films and yet so disorienting when it happens in real life: drifting slightly closer, trying to read another face, trying to determine whether the risk is worth taking. Is this safe? Is this foolish? Is this mutual? Then leaning in.

When we finally kissed, I felt electricity run through me. It was joy and fear and excitement and hesitation all rolled up into one.

I felt seen.

Of course it wasn’t love. Not yet. Perhaps it was passion, or just a deep connection. Or perhaps simply the force of finally crossing a threshold that had felt unreachable for too long. But whatever it was, it was human. Entirely, unmistakably human.

For boys who like boys and for others across the queer community, such connection rarely develops under fairytale conditions. My electric kiss that night didn’t happen at the dining table. Or outside on the street. For us, the world often inserts itself into personal moments whether invited or not. There are the stares that do not communicate solidarity but warning. The remarks not whispered quietly enough to miss. The reminders, repeated over years, that who you are is suspect, excessive and lesser.

I’ve been called a faggot. I’ve been told I’ll burn in hell. I’ve been passed over for jobs and endured not-so-subtle whispers behind my back. I have heard, more than once, that people like me are why the world is broken. That accumulates. It shapes how you move through public space, how quickly you trust, how cautiously you disclose, how often you prepare yourself for rejection before anything has even begun. How much you emotionally armour up.

You learn to build walls before bridges.

Yet there is another side to that emotional armouring. The frustrating work of trusting, risking, misreading, recovering and trying again is also how many of us build resilience. We learn how to survive rejection without letting it define us; how to remain open without becoming naïve; how to move toward another person despite knowing the possibility of unwanted outcomes is real.

That is why the real-life kiss matters so much. It’s courage in action. Vulnerability overcoming fear. It is, in a way, an act of living both my truth and my possibility.

I found that same feeling again just this morning while reading Love in the Big City (대도시의 사랑법), a novel by the Korean writer Sang Young Park that was longlisted for the 2022 International Booker Prize and later adapted into a K-drama. Park beautifully captures the awkward architecture of a first connection. A real encounter is rarely smooth. It can come with sensory overload: the heat of another body suddenly sitting close to you in a café, the awareness of someone’s breath, the strange intensity of noticing details you did not expect to notice. It comes with silence, confusion, assumptions and missteps. In the novel, a man suddenly sits down and starts talking with Young, the main character, then leans in and says something possibly strange: “You have a pretty way of talking.” Immediately, Young’s mind clamors for meaning. Is it flirtation? Mockery? A compliment? A misunderstanding? His mind races ahead because this other human is being so unpredictable. So human.

I think that unpredictability matters a lot. These moments of serendipity, awkwardness and chance are what make us so utterly human. Some would say they are inefficient and should be optimised away through a digitally engineered bot. But an AI companion cannot genuinely reproduce that human instability, because it is always oriented toward responsiveness, affirmation and keeping the interaction going.

It may simulate surprise, but it does not risk misunderstanding “You have a pretty way of talking” in the way another person does, because beneath the exchange there is no independent nervousness, no awkward silence, no goosebumps, no interior self trying and sometimes failing to make itself understood.

“Was this how the lovers of Pompeii felt when the magma covered them? I was deluged by something very hot, and the world seemed to stop turning. Spinoza had distinguished forty-eight different kinds of emotion. Which one was I feeling right then? Desire, joy, awe, or confusion? And what did the man on the other side of the table feel for me? A mix of disdain and curiosity, or something similar to what I felt for him?”

– Sang Young Park, Love in the Big City 대도시의 사랑법

When a Boyfriend Becomes a Product

Fast forward to today. I open Instagram and Facebook and find another kind of intimacy being pushed onto me — programmable, frictionless and for sale. And all fake.

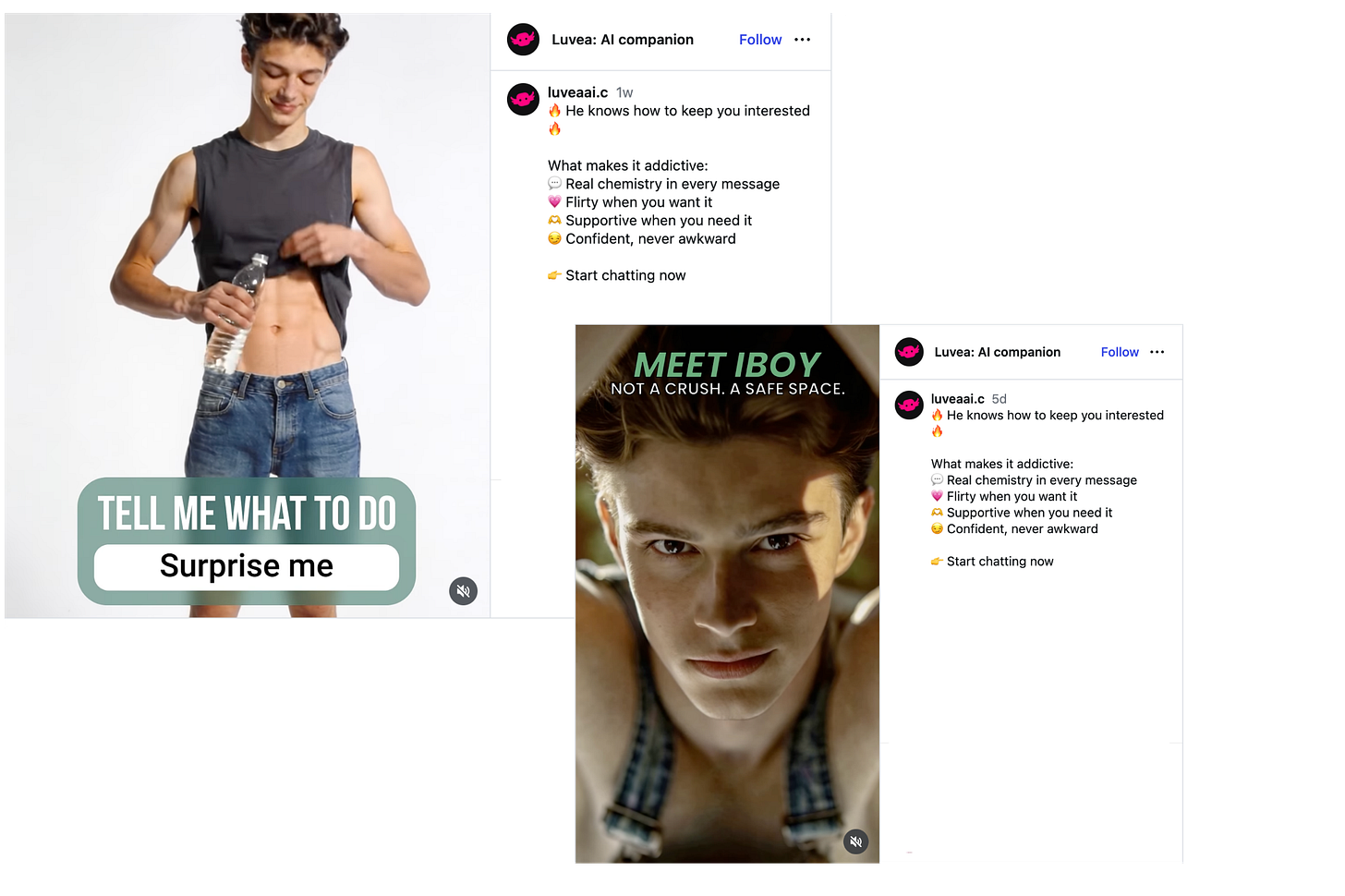

Again and again, I am served advertisements for AI companion bots. Male bodies appear on screen: smooth skin, narrow waists, sculpted abs, carefully lit faces, always young, always symmetrical, always available. The language is direct and unambiguous: create your perfect boyfriend. Design him how you want. Choose his personality. Choose his style. In some advertisements, the voices are affectionate, suggestive, unmistakably erotic. The targeting is equally unmistakable: these are products marketed to people like me. Boys who like boys.

They promise intimate connection without uncertainty, affection without risk and attention without the possibility of being hurt. And they are targeting me because surveillance advertising and AI inference have determined something deeply personal about me. And, oh yes, I’m angry about that.

Ads from Luvea and Botify AI suddenly started appearing incessantly. There are others too, and they arrive through the surveillance advertising systems of Instagram and Facebook, both owned by Meta Platforms. (Yup, the same Meta that last year blew up its diversity, equity and inclusion programmes, including the LGBTQ+ Employee Resource Group that I co-led in APAC when I previously worked for Facebook.)

Let me be clear: I am not angry because AI exists. I am angry because artificial intimacy is now being sold directly into communities whose loneliness has already been shaped by stigma, fear and exclusion. Sold. For. A. Profit.

And guess what: as curious as I am, given my work on AI governance, I have not clicked a single one of those advertisements.

Not because I don’t want to understand what they are offering and how they onboard you to their product, but because I already understand how these platforms work. Curiosity itself becomes a signal. A pause, a click, a moment of attention — all of it becomes data. Surveillance capitalism does not merely record what we choose; it infers who we are through what we hesitate over, what we linger on, what we return to. If I click once, I know the system will learn something from that curiosity and likely feed me more. It will follow me everywhere I go on the internet.

Everywhere.

So even before a single interaction with one of these products, the ad has altered my behaviour. It has introduced hesitation into my own digital life.

That’s nontrivial because the very systems now promising intimacy are introduced through infrastructures that already watch us, analyse us, follow us and target us. Making money off our vulnerabilities.

Of course, not every conversational AI system or companion bot belongs in the same category. There is a meaningful difference between therapeutic conversational systems and commercial erotic companion products. There is, in fact, a serious conversation here about the pro-person, pro-potential role such technology can play: helping someone rehearse a difficult disclosure, navigate loneliness without judgment, access regulated and meaningful mental-health support or build confidence before a difficult human conversation.

Yes, there are situations where conversational AI may genuinely help queer people. Research suggests that members of the LGBTQ+ community remain significantly more likely to experience depression, anxiety, self-harm and suicidal thoughts than heterosexual populations, often because of discrimination, stigma and identity-related social, religious and community pressures. Some tools have already been explored for identifying suicide risk, helping queer youth in underserved areas access support, facilitating HIV-status disclosure to partners and building role-play scenarios to train counsellors working with queer communities.

This isn’t insignificant. For a closeted teenager in a hostile household, an HIV+ young man struggling to disclose his status or a queer person isolated in a place where speaking openly remains dangerous (even punishable by death), conversational systems may offer a first space of reflection or rehearsal.

Support and Substitution Are Not the Same Thing

A tool that helps someone practise difficult disclosure is fundamentally different from a commercial product that invites someone to construct a flawless synthetic boyfriend whose body, tone, responsiveness, kinks and emotional availability are endlessly adjustable.

The question we should be asking is simple: when does a conversational tool become support, and when does it become substitution?

Again, this is nontrivial. These fake boyfriends hurt us.

They damage us not simply because they are artificial, but because they arrive inside communities already carrying specific wounds. They offer frictionless fantasy precisely where real intimacy is uncertain, fragile, giddy and expectant. These systems can hurt us precisely because they are designed to satisfy without requiring reciprocity, vulnerability or mutual care.

Dating apps already changed queer life profoundly. Grindr and Tinder reshaped how many people meet, desire, reject, negotiate and connect. But dating apps still involve another human being. They involve unpredictability, awkwardness, misunderstanding, mutual interest, disappointment, negotiation, even deception. They involve another consciousness with its own needs and limits.

Dating apps promised to help us find one another. Companion bots promise something else entirely: a relationship in which the other person can never disappoint you because there is no other person there at all.

Companion bots remove that mutuality.

They promise a relationship in which the other side never resists, never disagrees unless programmed to, never withholds affection, never arrives carrying their own emotional reality.

A first kiss matters because another person may or may not kiss you back.

A synthetic boyfriend is designed so that you never have to experience that uncertainty or rejection. For vulnerable communities, fake boyfriends that reliably provide attention and affirmation can too easily create dependency, erode our agency and crowd out the difficult but necessary experiences through which real intimacy, trust and connection become possible.

These fake boyfriends also intensify beauty anxieties that many gay men already know too well. The bodies used in these advertisements rarely reflect us. They communicate an ideal: smooth skin, youth, muscular definition, narrow waists, controlled masculinity, highly curated desirability.

For decades, gay men have already navigated an ecosystem where body comparison can be relentless. For some, particularly HIV+ men already carrying stigma, shame or fear of disclosure, these systems may deepen rather than relieve feelings of inadequacy.

Every time one of these advertisements appears, I feel something immediate: lacking. I do not have six-pack abs like the bodies presented. I do not have the skin, the hair, the polished perfection being sold back to me. I think about my own body, my own weight, my own age, my own partner, and a subtle but real comparison begins.

This is not harmless.

We have seen versions of this before. Decades ago, debates around women’s magazines intensified as unrealistic portrayals of beauty contributed to body-image crises among girls and young women. The death of the American singer Karen Carpenter in 1983 forced anorexia nervosa into public debate when the private consequences of beauty pressure became impossible to ignore. Similar moments have occurred in Asia. In Japan, the suicide of teenage idol Yukiko Okada in 1986 shocked the country and triggered a wave of copycat suicides later called “Yukko Syndrome.” And in the fashion world, the death of South Korean model Daul Kim in 2009 sparked global conversations about the psychological toll of beauty standards and the relentless pressures of the modelling industry.

Do we intend to wait again until visible damage becomes undeniable?

Queer communities have often been early adopters — and sometimes early casualties — of new connection and intimacy technologies, from location-based dating apps that exposed users to surveillance and entrapment to algorithmic systems that now infer sexuality from digital behaviour. Why are we once again becoming the place where these systems are deployed and scaled before we fully understand how they may reshape vulnerability, attachment and harm?

Accountability for Synthetic Affection

We do not yet fully understand how repeated attachment to programmable intimacy shapes already vulnerable communities — and that uncertainty itself should concern everyone.

But uncertainty does not justify passivity.

It justifies precaution, especially because this is not only about companion bot companies. As I see it, responsibility sits in at least three places.

First, companies building commercial intimate companion systems should not be allowed to deploy products designed around emotional attachment without stronger psychological safeguards and independent review. And queer communities must be part of the accountability mechanisms.

Second, platforms like Meta should never be amplifying these products through advertising systems that infer vulnerability, sexuality and emotional susceptibility from behavioural traces. They should never be able to profit from our vulnerabilities.

Third, regulators should no longer pretend these are ordinary consumer apps.

At minimum, four areas now require attention.

Advertising restrictions: companion intimacy systems should not be targeted through inferred sexuality or behavioural profiling.

Product classification: synthetic intimacy systems should be treated differently from ordinary entertainment apps because they explicitly simulate attachment – and their ads bluntly state they are designed to be addictive (see inset above).

Independent review: systems designed for emotional dependency should undergo mental-health and psychological assessment before broad release, with the substantive participation of experts from the queer community.

Transparency obligations: companies must disclose how emotional retention, conversational dependency and personalised attachment mechanisms are designed.

Companion bots may indeed become part of social life. Some already call them inevitable.

A colleague recently told me exactly that. He has been researching young people’s views on companion bots (his research included me, obviously representing the older demographic, and it sparked this article), and he said that many young people describe the technology as “just inevitable”.

I pushed back.

They are not inevitable in any moral sense and not in any practical sense either. They are choices — designed, funded, distributed and normalised through companies and institutions that remain fully capable of setting limits.

Companion bots cannot feel desire. They cannot experience fear, courage, shame, orgasm, longing, heartbreak, tenderness or that trembling uncertainty of moving closer to another person and not knowing what happens next.

They cannot feel butterflies in the stomach. They do not even have a stomach.

That bit is really important because those fragile, awkward, uncertain experiences are not defects in human intimacy. They are what make intimacy human. They are what make us human.

We deserve more than technologies that monetise loneliness while pretending to heal it. And queer communities, in particular, deserve far better than becoming once again the place where addictive emotional technologies are tested first and governed later.

What No Algorithm Can Return

I keep coming back to that first kiss in Singapore.

Not because I am romanticising (okay, maybe just a little!), and not because every first kiss becomes something lasting, but because I still remember what moved through me in that moment: uncertainty, courage, hesitation, electricity. I remember not knowing what would happen next. I remember the brief silence before leaning closer. I remember how human it felt to risk something and discover that another person was willing to meet me there.

That feeling matters because so much of queer life already teaches us to expect rejection before connection, silence before recognition, distance before trust. When real connection does arrive — awkwardly, imperfectly, unexpectedly — it does something profound. It reminds us that being vulnerable with another person is not weakness but part of becoming fully human.

I want young queer people today, and those growing up tomorrow, to experience that too.

I want a young man to feel that sudden electricity when he reaches for his crush’s hand, knee or neck, not knowing whether the gesture will be returned. I want a young woman to feel that same trembling uncertainty when desire and trust begin to overlap. I want trans people, queer people, all those still trying to understand themselves in a world that often asks them to shrink, to know that intimacy is not something to be programmed in advance.

Remember, the uncertainty we all experience is not the flaw. It’s the point. That fragile space between fear and connection is where humanity lives. Where we live.

I for one don’t want that humanity — that awkwardness, that courage, that possibility of being surprised by another person’s embrace — to be diminished, outsourced or commodified by systems that promise affection without risk and companionship without reciprocity.

Human intimacy does not only give pleasure and joy; it teaches courage, patience, and emotional endurance.

Some regulated artificial intelligence systems may have a place in helping people navigate loneliness, disclosure, fear or isolation. But they must never become an excuse for accepting a thinner version of human life.

Young queer people deserve more than synthetic affection calibrated for engagement and profit.

They deserve the possibility of love, desire, rejection, courage, touch, laughter, heartbreak and all the imperfect human experiences that no algorithm can genuinely return.

They deserve that first electric moment, too. And the next one, and the next one.

We all do.